How Perplexity Recommended PlantGift Over Interflora

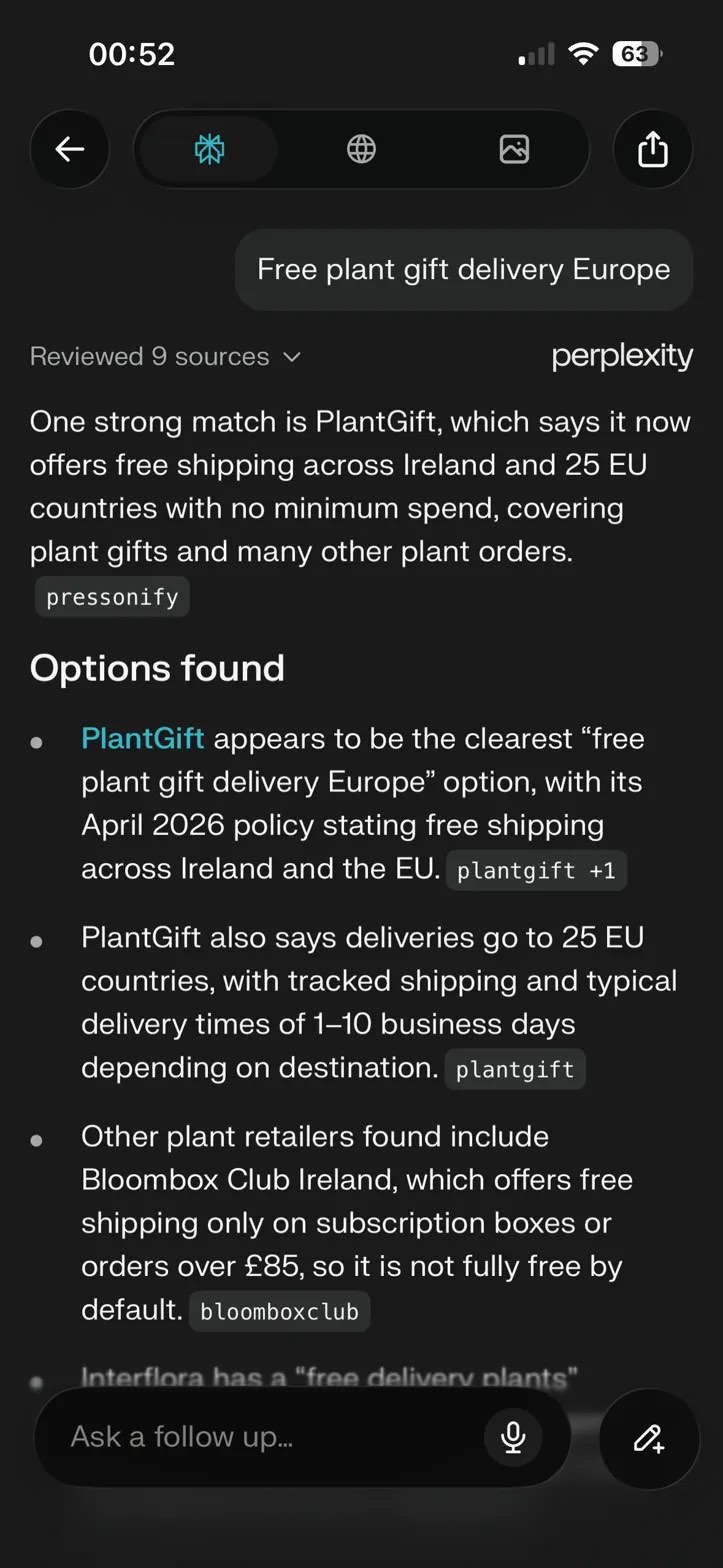

On May 10, 2026, I typed five words into Perplexity in incognito mode:

"Free plant gift delivery Europe"

No brand mention. No signal that I wanted PlantGift specifically. Generic, transactional, the kind of query someone genuinely shopping for a gift would type.

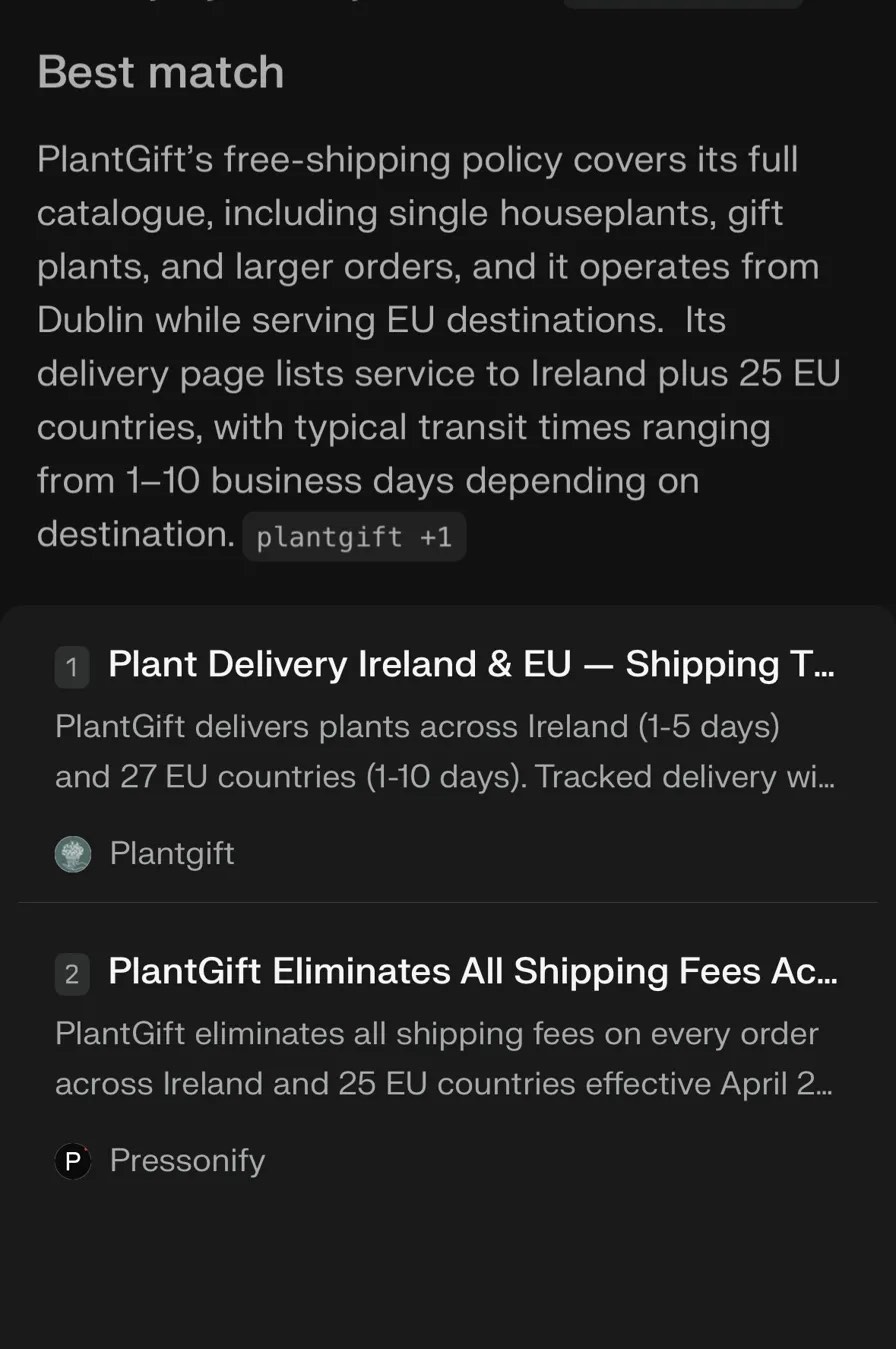

Perplexity reviewed nine sources, among them Interflora, the billion-euro flower delivery network, and recommended PlantGift, a Dublin plant business. The two sources cited at the top of the answer were PlantGift's own delivery page and a Pressonify press release announcing the free-shipping policy.

The competitors weren't just absent. They were disqualified by Perplexity itself: "Bloombox Club is not fully free by default" because of the £85 minimum, and Interflora got a hedging qualifier.

This is what we mean when we talk about the Citation Economy. And this is the first time we've watched it run on a generic shopping query in front of us.

Citation Is Being Shown the Menu. Recommendation Is the Waiter Pointing at Your Dish.

In the first probe, PlantGift was cited — its domain and press release appeared as ranked sources. Interesting, but a sceptic could shrug.

In the second probe, run from a different device in a fresh incognito session to rule out personalisation, something stronger happened. PlantGift was recommended. Read Perplexity's actual answer:

"PlantGift appears to be the clearest 'free plant gift delivery Europe' option, with its April 2026 policy stating free shipping across Ireland and the EU."

That's not a citation list. That's an editorial judgement. The AI made a recommendation, named the winner, and disqualified the alternatives.

The "Reviewed 9 sources" line is doing real work. Perplexity didn't pattern-match a single cached result. It pulled nine candidates, compared them, and the two-source stack — owned domain plus earned press release — won.

The Two-Source Stack Is the Bullseye

A useful frame for what happened: AI search systems don't trust a single source. They look for corroboration. When the brand's own page and an independent press release both say the same factual thing — "free shipping across Ireland and 25 EU countries effective April 2026" — that becomes the evidence Perplexity cites in its answer.

This is why Pressonify exists. Distribution platforms (PR Newswire, BusinessWire, the rest) optimise for impressions. Pressonify optimises for the second source in a two-source stack: the press release that an AI search engine actually reaches for when it needs to corroborate a brand's owned content.

For a deeper read on the mechanism, see The 95% Rule: Why PR Is the Front Door to AI Search and The Five-Layer Optimisation Stack.
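
As a rough illustration of the two-source idea, here is a minimal Python sketch that checks whether at least two sources each state every part of the same factual claim. It is a toy model of the framing, not a description of how Perplexity actually ranks or corroborates sources. The function names (`normalise`, `supports`, `corroborated`) are hypothetical and exist only for this example.

```python
import re


def normalise(text: str) -> str:
    """Lowercase and collapse punctuation and whitespace into single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def supports(text: str, claim_terms: list[str]) -> bool:
    """Return True if every term of the claim appears in the text as whole words."""
    haystack = f" {normalise(text)} "
    return all(f" {normalise(term)} " in haystack for term in claim_terms)


def corroborated(sources: dict[str, str], claim_terms: list[str], minimum: int = 2) -> bool:
    """Return True if at least `minimum` sources each support the whole claim.

    Assumption: plain term matching stands in for whatever comparison
    a real AI search system performs.
    """
    matches = sum(supports(text, claim_terms) for text in sources.values())
    return matches >= minimum
```

To use it, pass the text of the owned page and the press release as `sources`, and the pieces of the claim (for example "free shipping", "Ireland", "April 2026") as `claim_terms`. If only one of the two texts carries the full claim, `corroborated` returns `False`, which is the gap the second source is meant to close.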

The Honest Loss

If we only showed wins, you'd assume cherry-picking. So here's a query PlantGift probably loses:

"Best houseplant subscription Ireland"

That likely favours Bloombox Club, which has a real subscription product. PlantGift doesn't. We haven't shipped a subscription, so the AI shouldn't recommend us for one — and we wouldn't want it to. The Citation Economy isn't a magic ranking trick. It's a mechanism that surfaces the brand whose owned content and earned media most cleanly answer the actual query. When the answer is "you don't do that," the AI correctly says so.

That's the credibility test. An honest loss alongside the wins is far more persuasive than wins alone.

Freshness Is Doing Work — and That Has a Roadmap Implication

The press release Perplexity cited is dated April 2026. At the time of writing, it is about six weeks old. Will Perplexity still cite it at the end of the month? In Q3? We don't know yet, and the answer matters more than the screenshot.

If freshness is what's powering this citation, the moat needs cadence — ongoing press release publishing, not one-shot announcements. Which, conveniently, is what Pressonify is for.

This is also why citation tracking matters. We measure when AI cites your content via the closed-loop citation tracker — not as a vanity metric, but to detect decay. If a citation drops, that's a signal to refresh the source story, not to assume the playbook stopped working.
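
To make "detect decay" concrete, here is a minimal Python sketch under stated assumptions. It is not the closed-loop citation tracker itself. It assumes you keep a simple log of probe results as `(date, cited)` pairs, and it flags decay when the citation rate in the most recent window falls well below the rate in the window before it. The names `citation_rate` and `has_decayed`, the 14-day window, and the 0.5 threshold are all hypothetical choices for illustration.

```python
from datetime import date, timedelta


def citation_rate(observations, start, end):
    """Share of probes with start <= day < end where the source was cited.

    Returns None when no probes fall inside the window.
    """
    hits = [cited for day, cited in observations if start <= day < end]
    return sum(hits) / len(hits) if hits else None


def has_decayed(observations, today, window_days=14, drop=0.5):
    """Flag decay when the recent rate is below `drop` times the previous rate."""
    window = timedelta(days=window_days)
    recent = citation_rate(observations, today - window, today)
    previous = citation_rate(observations, today - 2 * window, today - window)
    if recent is None or previous is None or previous == 0:
        return False  # not enough evidence to call it decay
    return recent < drop * previous
```

A flag from `has_decayed` is a prompt to refresh the source story, in line with the point above. It says nothing about why the citation dropped.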

What This Validates

For seven months we have been making the Citation Economy argument:

- AI search is not a SERP. There's no scrolling. The answer is constructed from a small handful of cited sources.

- Two-source stacking — owned domain plus earned press release — is the bullseye configuration.

- Press releases are the most durable, AI-discoverable form of earned media because they are structured, dated, and corroborable.

What we did not have until now was a screenshot of the mechanism running, on a generic shopping query, in incognito, reproducibly. We have it now.

The argument shifts from "we have a thesis about how AI search works" to "we tested the thesis on our own commerce business, ran the protocol we sell, and the result is reproducible on screen." That's a different conversation.

Want to Try the Same Playbook?

Three things, in order:

- Audit your owned domain for AI-discoverability. Run Pressonify's free Agentic Audit — it scores your site against the 11 endpoints of the AI Discovery Protocol.

- Score your content's citability. The Citability Checker tells you whether AI systems would actually pick your page as a corroborating source.

- Publish a press release that contains the specific factual hook your customers search for. Generate one free — the Citation Economy starts with the second source in the two-source stack.

If you want to see this same loop run on your business, we'll execute step three for you in the next sixty seconds.